Overview

This article adapts the GitHub project "OpenAI Function Calling 101" to Azure OpenAI. It is based on:

GitHub: Function Calling 101

How to use function calling with Azure OpenAI Service - Azure OpenAI Service

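One of the difficulties of working with LLMs like ChatGPT is that they don't reliably produce structured data output. That matters for programmatic systems, which depend heavily on structured data to interact with one another. For example, if you want to build a program that analyzes the sentiment of movie reviews, you might have to run a prompt like this: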

```python
prompt = f'''
Please perform a sentiment analysis on the following movie review:

{MOVIE_REVIEW_TEXT}

Please output your response as a single word: either "Positive" or "Negative". Do not add any extra characters.
'''
```

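The problem is that this doesn't always work. The LLM will often add an unwanted preamble or a longer explanation, such as: "The sentiment of this movie is: Positive."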

For example, with a movie title filled in:

```python
prompt = '''
Please perform a sentiment analysis on the following movie review:

The Shawshank Redemption

Please output your response as a single word: either "Positive" or "Negative". Do not add any extra characters.
'''
```

---

OpenAI's answer, which ignores the single-word instruction and returns code plus a sentence:

```python
import nltk
from nltk.sentiment import SentimentIntensityAnalyzer

# Define the movie review
movie_review = "The Shawshank Redemption"

# Perform sentiment analysis
sid = SentimentIntensityAnalyzer()
sentiment_scores = sid.polarity_scores(movie_review)

# Determine the sentiment based on the compound score
if sentiment_scores['compound'] >= 0:
    sentiment = "Positive"
else:
    sentiment = "Negative"

# Output the sentiment
sentiment
```

---

The sentiment analysis of the movie review "The Shawshank Redemption" is "Positive".

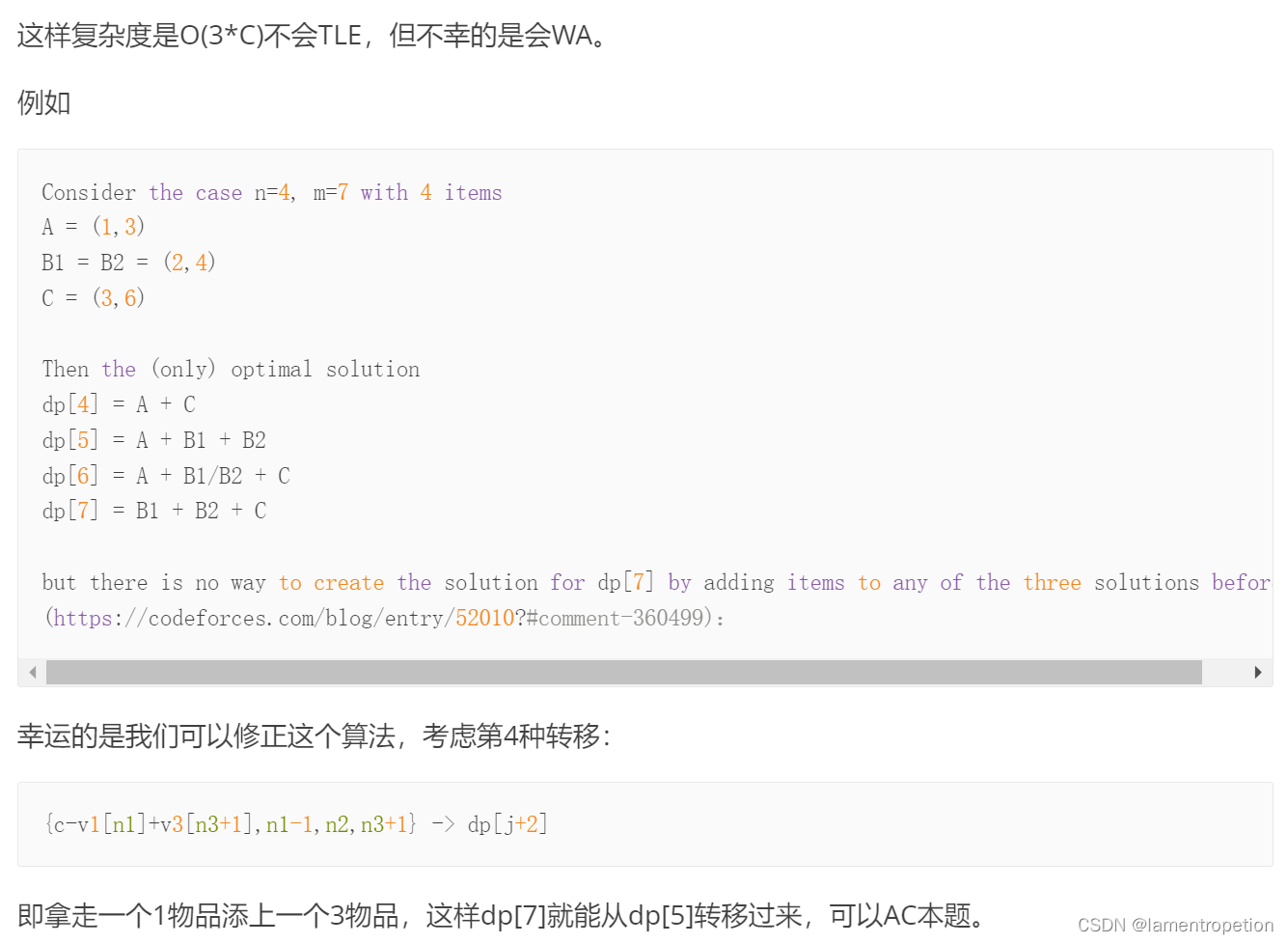

You could dig the answer out with a regular expression (🤢), but that is clearly not ideal. Ideally, the LLM would return structured JSON output like this:

```json
{
  "sentiment": "positive"
}
```
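For comparison, here is roughly what the regex workaround looks like. This is a minimal sketch, not part of the original article: `extract_sentiment` is a hypothetical helper that scans a free-form reply for a standalone "Positive" or "Negative" and gives up (returns `None`) when it finds neither or both. The second call shows why the approach is brittle.

```python
import re
from typing import Optional

# Hypothetical helper: pull a one-word sentiment label out of a free-form LLM reply.
_LABEL_RE = re.compile(r"\b(positive|negative)\b", re.IGNORECASE)

def extract_sentiment(reply: str) -> Optional[str]:
    """Return 'Positive' or 'Negative' if exactly one label appears, else None."""
    labels = {m.group(1).capitalize() for m in _LABEL_RE.finditer(reply)}
    # Missing or conflicting labels cannot be resolved reliably by pattern matching.
    if len(labels) == 1:
        return labels.pop()
    return None

print(extract_sentiment('The sentiment of this movie is: Positive.'))  # Positive
print(extract_sentiment('Not negative at all, overall positive.'))     # None
```

The second reply is clearly positive, yet the regex cannot decide; structured output removes this guesswork.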

Enter OpenAI's new function calling! Function calling is the answer to exactly this problem.

The original Jupyter notebook walks through simple examples of OpenAI's new function calling in Python. For the full documentation, see https://platform.openai.com/docs/guides/gpt/function-calling

Experiments

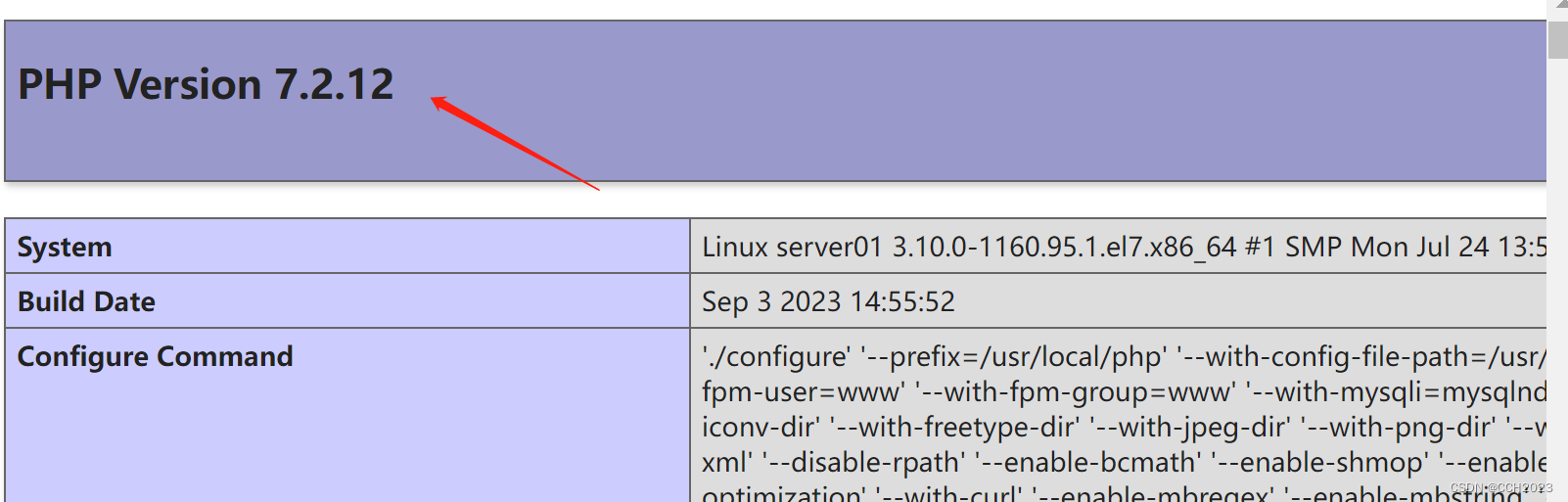

Initial configuration

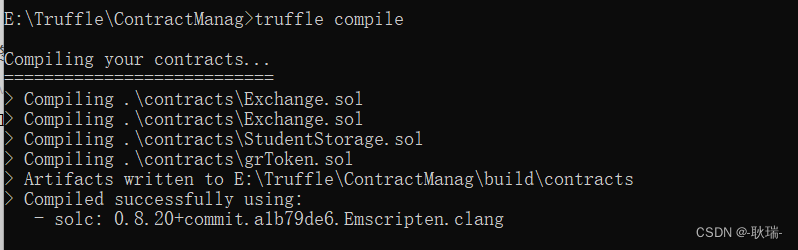

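Install the openai Python client; if it is already installed, upgrade it to get function calling support. The code in this article uses the pre-1.0 interface (`openai.ChatCompletion`, `openai.api_type`), which was removed in openai 1.0, so keep the version below 1.0. PyYAML is also needed to read the key file:

```shell
pip3 install --upgrade "openai<1.0" pyyaml
```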

```python
# Importing the necessary Python libraries
import os
import json
import yaml
import openai
```

For Azure OpenAI, configure the API settings the way Azure requires and keep the key in a separate file. Also prepare an about-me.txt file:

```yaml
# ../keys/openai-keys.yaml
API_KEY: 06332xxxxxxcd4e70bxxxxxx6ee135
```

Contents of ../data/about-me.txt:

```text
Hello! My name is Enigma Zhao. I am an Azure cloud engineer at Microsoft. I enjoy learning about AI and teaching what I learn back to others. I have two sons and a daughter. I drive a Audi A3, and my favorite video game series is The Legend of Zelda.
```

```python
# Azure OpenAI settings for the pre-1.0 openai client
openai.api_version = "2023-07-01-preview"
openai.api_type = "azure"
openai.api_base = "https://aoaifr01.openai.azure.com/"

# Loading the API key from file (NOT pushed to GitHub)
with open('../keys/openai-keys.yaml') as f:
    keys_yaml = yaml.safe_load(f)

# Applying our API key
openai.api_key = keys_yaml['API_KEY']
os.environ['OPENAI_API_KEY'] = keys_yaml['API_KEY']

# Loading the "About Me" text from local file
with open('../data/about-me.txt', 'r') as f:
    about_me = f.read()
```

Testing JSON conversion

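Before using function calling, let's first see how to produce structured JSON with prompt engineering (and regex, if needed). We will build on this later.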

```python
# Engineering a prompt to extract as much information from "About Me" as a JSON object
about_me_prompt = f'''
Please extract information as a JSON object. Please look for the following pieces of information.
Name
Job title
Company
Number of children as a single number
Car make
Car model
Favorite video game series

This is the body of text to extract the information from:
{about_me}
'''

# Getting the response back from ChatGPT (gpt-4)
# On Azure, `engine` is the deployment name (here the deployment is named gpt-4)
openai_response = openai.ChatCompletion.create(
    engine="gpt-4",
    messages=[{'role': 'user', 'content': about_me_prompt}]
)

# Loading the response as a JSON object
json_response = json.loads(openai_response['choices'][0]['message']['content'])
print(json_response)
```

Output:

```text
root@ubuntu0:~/python# python3 fc1.py
{'Name': 'Enigma Zhao', 'Job title': 'Azure cloud engineer', 'Company': 'Microsoft', 'Number of children': 3, 'Car make': 'Audi', 'Car model': 'A3', 'Favorite video game series': 'The Legend of Zelda'}
```
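The `json.loads` call above assumes the reply is pure JSON. In practice the model may wrap it in a Markdown code fence or add a sentence around it, and `json.loads` then raises `json.JSONDecodeError`. A defensive sketch follows; `parse_json_reply` is a hypothetical helper, not part of the openai library. It strips an optional fence and otherwise falls back to the span between the first `{` and the last `}`:

```python
import json
import re

# Matches a ```json ... ``` (or bare ```) fence around the payload.
_FENCE_RE = re.compile(r"```(?:json)?\s*(.*?)\s*```", re.DOTALL)

def parse_json_reply(reply: str) -> dict:
    """Best-effort parse of a JSON object from an LLM text reply."""
    text = reply.strip()
    fenced = _FENCE_RE.search(text)
    if fenced:
        text = fenced.group(1)
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        # Fall back to the span between the first '{' and the last '}'.
        start, end = text.find('{'), text.rfind('}')
        if start == -1 or end <= start:
            raise
        return json.loads(text[start:end + 1])

reply = 'Here is the JSON:\n```json\n{"Name": "Enigma Zhao", "Number of children": 3}\n```'
print(parse_json_reply(reply))
```

This is still a heuristic; function calling, shown next, avoids the problem at its source.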

A simple custom function

```python
# Defining our initial extract_person_info function
def extract_person_info(name, job_title, num_children):
    '''
    Prints basic "About Me" information

    Inputs:
        name (str): Name of the person
        job_title (str): Job title of the person
        num_children (int): The number of children the parent has.
    '''
    print(f'This person\'s name is {name}. Their job title is {job_title}, and they have {num_children} children.')

# Defining how we want ChatGPT to call our custom functions
my_custom_functions = [
    {
        'name': 'extract_person_info',
        'description': 'Get "About Me" information from the body of the input text',
        'parameters': {
            'type': 'object',
            'properties': {
                'name': {
                    'type': 'string',
                    'description': 'Name of the person'
                },
                'job_title': {
                    'type': 'string',
                    'description': 'Job title of the person'
                },
                'num_children': {
                    'type': 'integer',
                    'description': 'Number of children the person is a parent to'
                }
            }
        }
    }
]

openai_response = openai.ChatCompletion.create(
    engine="gpt-4",
    messages=[{'role': 'user', 'content': about_me}],
    functions=my_custom_functions,
    function_call='auto'
)

print(openai_response)
```

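Output is below. The model organizes the about_me content according to the function's schema and returns a call to that function (the name plus the arguments); it does not run the function itself: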

```text
root@ubuntu0:~/python# python3 fc2.py
{
  "id": "chatcmpl-7tYiDoEjPpzNw3tPo3ZDFdaHSbS5u",
  "object": "chat.completion",
  "created": 1693475829,
  "model": "gpt-4",
  "prompt_annotations": [
    {
      "prompt_index": 0,
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "choices": [
    {
      "index": 0,
      "finish_reason": "function_call",
      "message": {
        "role": "assistant",
        "function_call": {
          "name": "extract_person_info",
          "arguments": "{\n \"name\": \"Enigma Zhao\",\n \"job_title\": \"Azure cloud engineer\",\n \"num_children\": 3\n}"
        }
      },
      "content_filter_results": {}
    }
  ],
  "usage": {
    "completion_tokens": 36,
    "prompt_tokens": 147,
    "total_tokens": 183
  }
}
```
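Because the model only proposes the call, running the function is up to your code, and `arguments` arrives as a JSON-encoded string rather than a dict. A minimal sketch using the `function_call` object from the response above, copied into a plain dict so it runs offline (with the real pre-1.0 client you would index `openai_response['choices'][0]['message']` the same way):

```python
import json

def extract_person_info(name, job_title, num_children):
    '''Prints basic "About Me" information (same as the function defined earlier).'''
    print(f'This person\'s name is {name}. Their job title is {job_title}, and they have {num_children} children.')

# The assistant message returned above, reduced to the fields we need.
message = {
    'role': 'assistant',
    'function_call': {
        'name': 'extract_person_info',
        'arguments': '{\n "name": "Enigma Zhao",\n "job_title": "Azure cloud engineer",\n "num_children": 3\n}',
    },
}

# 'arguments' is a string, so decode it before use.
args = json.loads(message['function_call']['arguments'])
# Keyword unpacking matches arguments to parameters by name, not by position.
extract_person_info(**args)
# prints: This person's name is Enigma Zhao. Their job title is Azure cloud engineer, and they have 3 children.
```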

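In the example above, the custom function extracts three very specific pieces of information, and passing our custom "About Me" text as the prompt shows that this works.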

What happens if you pass in a prompt that doesn't contain that information?

In the API client call we set the function_call parameter to 'auto'. This tells the model to use its own judgment about when to produce output for one of the custom functions.
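Besides 'auto', the legacy `function_call` parameter also accepts 'none' (answer in plain text, never call a function) or an object naming a single function, which forces the model to call that function. A small sketch; `function_call_param` is a hypothetical helper that only builds the value you would pass to `openai.ChatCompletion.create(..., functions=my_custom_functions, function_call=...)`:

```python
from typing import Optional, Union

def function_call_param(force: Optional[str] = None, disable: bool = False) -> Union[str, dict]:
    """Build the value for the `function_call` request parameter.

    'auto'        -> the model decides whether to call a function
    'none'        -> the model answers in plain text
    {'name': ...} -> the model must call the named function
    """
    if disable and force:
        raise ValueError('cannot both force and disable function calling')
    if disable:
        return 'none'
    if force:
        return {'name': force}
    return 'auto'

print(function_call_param())                              # auto
print(function_call_param(force='extract_person_info'))   # {'name': 'extract_person_info'}
```

Forcing a function guarantees structured output, but with an unrelated prompt (like the one below) the model then has to make up arguments, so 'auto' is the safer default here.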

So what happens when the submitted prompt doesn't match any of the custom functions?

Put simply, the model falls back to its usual behavior, as if function calling didn't exist.

Let's test this with an arbitrary prompt, "How tall is the Eiffel Tower?", by changing the call as follows:

```python
openai_response = openai.ChatCompletion.create(
    engine="gpt-4",
    messages=[{'role': 'user', 'content': 'How tall is the Eiffel Tower?'}],
    functions=my_custom_functions,
    function_call='auto'
)

print(openai_response)
```

Output:

```text
{
  "id": "chatcmpl-7tYvdaiKaoiWW7NXz8QDeZZYEKnko",
  "object": "chat.completion",
  "created": 1693476661,
  "model": "gpt-4",
  "prompt_annotations": [
    {
      "prompt_index": 0,
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "choices": [
    {
      "index": 0,
      "finish_reason": "stop",
      "message": {
        "role": "assistant",
        "content": "The Eiffel Tower is approximately 330 meters (1083 feet) tall."
      },
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "usage": {
    "completion_tokens": 18,
    "prompt_tokens": 97,
    "total_tokens": 115
  }
}
```
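Code that consumes these responses therefore has to handle two shapes: a message carrying `function_call` (with `finish_reason` "function_call") or a message carrying ordinary `content` (with `finish_reason` "stop"). A sketch of that check; `route_message` is a hypothetical helper, and both choices are copied from the outputs above:

```python
import json
from typing import Any, Dict, Tuple

def route_message(choice: Dict[str, Any]) -> Tuple[str, Any]:
    """Return ('function', (name, args)) for a function call, else ('text', content)."""
    message = choice['message']
    if message.get('function_call'):
        call = message['function_call']
        # Arguments are a JSON string; decode them for the caller.
        return 'function', (call['name'], json.loads(call['arguments']))
    return 'text', message.get('content')

eiffel_choice = {
    'finish_reason': 'stop',
    'message': {'role': 'assistant',
                'content': 'The Eiffel Tower is approximately 330 meters (1083 feet) tall.'},
}
person_choice = {
    'finish_reason': 'function_call',
    'message': {'role': 'assistant',
                'function_call': {'name': 'extract_person_info',
                                  'arguments': '{"name": "Enigma Zhao", "job_title": "Azure cloud engineer", "num_children": 3}'}},
}

print(route_message(eiffel_choice))
print(route_message(person_choice))
```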

Multiple samples

Now let's see what happens when we run three different samples against all of the custom functions.

```python
# Defining a function to extract only vehicle information
def extract_vehicle_info(vehicle_make, vehicle_model):
    '''
    Prints basic vehicle information

    Inputs:
        - vehicle_make (str): Make of the vehicle
        - vehicle_model (str): Model of the vehicle
    '''
    print(f'Vehicle make: {vehicle_make}\nVehicle model: {vehicle_model}')

# Defining a function to extract all information provided in the original "About Me" prompt
def extract_all_info(name, job_title, num_children, vehicle_make, vehicle_model,
                     company_name, favorite_vg_series):
    '''
    Prints the full "About Me" information

    Inputs:
        - name (str): Name of the person
        - job_title (str): Job title of the person
        - num_children (int): The number of children the parent has
        - vehicle_make (str): Make of the vehicle
        - vehicle_model (str): Model of the vehicle
        - company_name (str): Name of the company the person works for
        - favorite_vg_series (str): Person's favorite video game series.
    '''
    print(f'''
This person\'s name is {name}. Their job title is {job_title}, and they have {num_children} children.
They drive a {vehicle_make} {vehicle_model}.
They work for {company_name}.
Their favorite video game series is {favorite_vg_series}.
''')
```

```python
# Defining how we want ChatGPT to call our custom functions
my_custom_functions = [
    {
        'name': 'extract_person_info',
        'description': 'Get "About Me" information from the body of the input text',
        'parameters': {
            'type': 'object',
            'properties': {
                'name': {
                    'type': 'string',
                    'description': 'Name of the person'
                },
                'job_title': {
                    'type': 'string',
                    'description': 'Job title of the person'
                },
                'num_children': {
                    'type': 'integer',
                    'description': 'Number of children the person is a parent to'
                }
            }
        }
    },
    {
        'name': 'extract_vehicle_info',
        'description': 'Extract the make and model of the person\'s car',
        'parameters': {
            'type': 'object',
            'properties': {
                'vehicle_make': {
                    'type': 'string',
                    'description': 'Make of the person\'s vehicle'
                },
                'vehicle_model': {
                    'type': 'string',
                    'description': 'Model of the person\'s vehicle'
                }
            }
        }
    },
    {
        'name': 'extract_all_info',
        'description': 'Extract all information about a person including their vehicle make and model',
        'parameters': {
            'type': 'object',
            'properties': {
                'name': {
                    'type': 'string',
                    'description': 'Name of the person'
                },
                'job_title': {
                    'type': 'string',
                    'description': 'Job title of the person'
                },
                'num_children': {
                    'type': 'integer',
                    'description': 'Number of children the person is a parent to'
                },
                'vehicle_make': {
                    'type': 'string',
                    'description': 'Make of the person\'s vehicle'
                },
                'vehicle_model': {
                    'type': 'string',
                    'description': 'Model of the person\'s vehicle'
                },
                'company_name': {
                    'type': 'string',
                    'description': 'Name of the company the person works for'
                },
                'favorite_vg_series': {
                    'type': 'string',
                    'description': 'Name of the person\'s favorite video game series'
                }
            }
        }
    }
]
```
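None of the schemas above use JSON Schema's `required` keyword, so the model is free to leave out any argument, and calling the Python function with a missing argument would then raise a `TypeError`. One option, sketched here with a hypothetical helper `require_all`, is to mark every property as required. Whether that is appropriate depends on whether you would rather receive a guessed value or a missing one.

```python
import copy

def require_all(function_spec: dict) -> dict:
    """Return a copy of a function spec whose parameters list every property as required."""
    spec = copy.deepcopy(function_spec)  # leave the original spec untouched
    params = spec['parameters']
    params['required'] = list(params.get('properties', {}))
    return spec

vehicle_spec = {
    'name': 'extract_vehicle_info',
    'description': 'Extract the make and model of the person\'s car',
    'parameters': {
        'type': 'object',
        'properties': {
            'vehicle_make': {'type': 'string', 'description': 'Make of the person\'s vehicle'},
            'vehicle_model': {'type': 'string', 'description': 'Model of the person\'s vehicle'},
        },
    },
}

strict_spec = require_all(vehicle_spec)
print(strict_spec['parameters']['required'])  # ['vehicle_make', 'vehicle_model']
```

Applied to the whole list, this would be `my_custom_functions = [require_all(f) for f in my_custom_functions]`.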

```python
# Defining a list of samples
samples = [
    str(about_me),
    'My name is David Hundley. I am a principal machine learning engineer, and I have two daughters.',
    'She drives a Kia Sportage.'
]

# Iterating over the three samples
for i, sample in enumerate(samples):
    print(f'Sample #{i + 1}\'s results:')

    # Getting the response back from ChatGPT (gpt-4)
    openai_response = openai.ChatCompletion.create(
        engine='gpt-4',
        messages=[{'role': 'user', 'content': sample}],
        functions=my_custom_functions,
        function_call='auto'
    )

    # Printing the sample's response
    print(openai_response)
```

Output:

```text
root@ubuntu0:~/python# python3 fc3.py
Sample #1's results:
{
  "id": "chatcmpl-7tq3Nh7BSccfFAmT2psZcRR3hHpZ7",
  "object": "chat.completion",
  "created": 1693542489,
  "model": "gpt-4",
  "prompt_annotations": [
    {
      "prompt_index": 0,
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "choices": [
    {
      "index": 0,
      "finish_reason": "function_call",
      "message": {
        "role": "assistant",
        "function_call": {
          "name": "extract_all_info",
          "arguments": "{\n\"name\": \"Enigma Zhao\",\n\"job_title\": \"Azure cloud engineer\",\n\"num_children\": 3,\n\"vehicle_make\": \"Audi\",\n\"vehicle_model\": \"A3\",\n\"company_name\": \"Microsoft\",\n\"favorite_vg_series\": \"The Legend of Zelda\"\n}"
        }
      },
      "content_filter_results": {}
    }
  ],
  "usage": {
    "completion_tokens": 67,
    "prompt_tokens": 320,
    "total_tokens": 387
  }
}
Sample #2's results:
{
  "id": "chatcmpl-7tq3QUA3o1yixTLZoPtuWOfKA38ub",
  "object": "chat.completion",
  "created": 1693542492,
  "model": "gpt-4",
  "prompt_annotations": [
    {
      "prompt_index": 0,
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "choices": [
    {
      "index": 0,
      "finish_reason": "function_call",
      "message": {
        "role": "assistant",
        "function_call": {
          "name": "extract_person_info",
          "arguments": "{\n\"name\": \"David Hundley\",\n\"job_title\": \"principal machine learning engineer\",\n\"num_children\": 2\n}"
        }
      },
      "content_filter_results": {}
    }
  ],
  "usage": {
    "completion_tokens": 33,
    "prompt_tokens": 282,
    "total_tokens": 315
  }
}
Sample #3's results:
{
  "id": "chatcmpl-7tq3RxN3tCLKbPadWkGQguploKFw2",
  "object": "chat.completion",
  "created": 1693542493,
  "model": "gpt-4",
  "prompt_annotations": [
    {
      "prompt_index": 0,
      "content_filter_results": {
        "hate": {
          "filtered": false,
          "severity": "safe"
        },
        "self_harm": {
          "filtered": false,
          "severity": "safe"
        },
        "sexual": {
          "filtered": false,
          "severity": "safe"
        },
        "violence": {
          "filtered": false,
          "severity": "safe"
        }
      }
    }
  ],
  "choices": [
    {
      "index": 0,
      "finish_reason": "function_call",
      "message": {
        "role": "assistant",
        "function_call": {
          "name": "extract_vehicle_info",
          "arguments": "{\n \"vehicle_make\": \"Kia\",\n \"vehicle_model\": \"Sportage\"\n}"
        }
      },
      "content_filter_results": {}
    }
  ],
  "usage": {
    "completion_tokens": 27,
    "prompt_tokens": 268,
    "total_tokens": 295
  }
}
```

For each prompt, the model chose the correct custom function. Note in particular the `name` value under `function_call` in each API response object.

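Besides being a convenient way to tell which function the arguments are for, this value also lets us programmatically map the response to the actual Python function and run the right code: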

```python
# Iterating over the three samples
for i, sample in enumerate(samples):
    print(f'Sample #{i + 1}\'s results:')

    # Getting the response back from ChatGPT (gpt-4), keeping only the message
    openai_response = openai.ChatCompletion.create(
        engine='gpt-4',
        messages=[{'role': 'user', 'content': sample}],
        functions=my_custom_functions,
        function_call='auto'
    )['choices'][0]['message']

    # Checking to see that a function call was invoked
    if openai_response.get('function_call'):
        # Checking to see which specific function call was invoked
        function_called = openai_response['function_call']['name']

        # Extracting the arguments of the function call
        function_args = json.loads(openai_response['function_call']['arguments'])

        # Invoking the proper function; keyword unpacking matches arguments by name,
        # so it does not depend on the key order in the model's JSON
        if function_called == 'extract_person_info':
            extract_person_info(**function_args)
        elif function_called == 'extract_vehicle_info':
            extract_vehicle_info(**function_args)
        elif function_called == 'extract_all_info':
            extract_all_info(**function_args)
```

Output:

```text
root@ubuntu0:~/python# python3 fc4.py
Sample #1's results:

This person's name is Enigma Zhao. Their job title is Azure cloud engineer, and they have 3 children.
They drive a Audi A3.
They work for Microsoft.
Their favorite video game series is The Legend of Zelda.

Sample #2's results:
This person's name is David Hundley. Their job title is principal machine learning engineer, and they have 2 children.
Sample #3's results:
Vehicle make: Kia
Vehicle model: Sportage
```
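The `if`/`elif` chain works, but as the number of functions grows, a lookup table keeps the name-to-function mapping in one place and makes it easy to reject a name the model invents. A sketch, with simplified stand-ins for the three functions defined earlier so the block runs on its own; `AVAILABLE_FUNCTIONS` and `dispatch` are hypothetical names:

```python
import json

# Simplified stand-ins for the functions defined earlier in the article.
def extract_person_info(name, job_title, num_children):
    return f'{name}, {job_title}, {num_children} children'

def extract_vehicle_info(vehicle_make, vehicle_model):
    return f'{vehicle_make} {vehicle_model}'

def extract_all_info(name, job_title, num_children, vehicle_make,
                     vehicle_model, company_name, favorite_vg_series):
    return f'{name} ({company_name}) drives a {vehicle_make} {vehicle_model}'

# Map the function names exposed to the model onto the Python callables.
AVAILABLE_FUNCTIONS = {
    'extract_person_info': extract_person_info,
    'extract_vehicle_info': extract_vehicle_info,
    'extract_all_info': extract_all_info,
}

def dispatch(function_call: dict):
    """Run the Python function named in a function_call object."""
    func = AVAILABLE_FUNCTIONS.get(function_call['name'])
    if func is None:
        raise ValueError(f"model requested unknown function {function_call['name']!r}")
    args = json.loads(function_call['arguments'])
    return func(**args)  # keyword arguments: the key order in the JSON does not matter

# Arguments deliberately given in reverse order.
print(dispatch({'name': 'extract_vehicle_info',
                'arguments': '{"vehicle_model": "Sportage", "vehicle_make": "Kia"}'}))
```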

OpenAI Function Calling with LangChain

Given LangChain's popularity in the generative AI community, here is some code showing how to use this feature through LangChain.

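Note that LangChain must be installed first. The imports below come from older LangChain releases; newer releases have moved or deprecated `langchain.chat_models.ChatOpenAI` and `create_structured_output_chain`, so you may need a release contemporary with this article that still works with `openai<1.0`:

```shell
pip3 install langchain
```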

```python
# Importing the LangChain objects
from langchain.chat_models import ChatOpenAI
from langchain.chains import LLMChain
from langchain.prompts.chat import ChatPromptTemplate
from langchain.chains.openai_functions import create_structured_output_chain

# Assumes the openai.api_* settings and about_me from the configuration section above

# Setting the proper instance of the OpenAI model
# llm = ChatOpenAI(model = 'gpt-3.5-turbo-0613')
# The model argument format has changed: ChatOpenAI(model = 'gpt-4') produces a warning,
# so the Azure deployment name is passed as 'engine' through model_kwargs instead
llm = ChatOpenAI(model_kwargs={'engine': 'gpt-4'})

# Setting a LangChain ChatPromptTemplate
chat_prompt_template = ChatPromptTemplate.from_template('{my_prompt}')

# Setting the JSON schema for extracting vehicle information
langchain_json_schema = {
    'name': 'extract_vehicle_info',
    'description': 'Extract the make and model of the person\'s car',
    'type': 'object',
    'properties': {
        'vehicle_make': {
            'title': 'Vehicle Make',
            'type': 'string',
            'description': 'Make of the person\'s vehicle'
        },
        'vehicle_model': {
            'title': 'Vehicle Model',
            'type': 'string',
            'description': 'Model of the person\'s vehicle'
        }
    }
}

# Defining the LangChain chain object for function calling
chain = create_structured_output_chain(output_schema=langchain_json_schema,
                                       llm=llm,
                                       prompt=chat_prompt_template)

# Getting results with a demo prompt
print(chain.run(my_prompt=about_me))
```

Output:

```text
root@ubuntu0:~/python# python3 fc0.py
{'vehicle_make': 'Audi', 'vehicle_model': 'A3'}
```
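Whichever route you take (the raw API or LangChain), the values still come from the model. A light check against the schema before using them can catch a missing or mistyped field. The sketch below is a hand-rolled subset check (types and `required` only) with a hypothetical helper name. For full JSON Schema validation, a dedicated validator such as the third-party `jsonschema` package would be the better choice.

```python
from typing import Any, Dict, List

# JSON Schema type names mapped to Python types (only the basic ones).
_JSON_TYPES = {'string': str, 'integer': int, 'number': (int, float), 'boolean': bool}

def schema_errors(result: Dict[str, Any], schema: Dict[str, Any]) -> List[str]:
    """List simple problems in `result` compared with a flat object schema."""
    errors = []
    properties = schema.get('properties', {})
    for key, value in result.items():
        if key not in properties:
            errors.append(f'unexpected field {key!r}')
            continue
        declared = properties[key].get('type')
        expected = _JSON_TYPES.get(declared)
        # bool is a subclass of int in Python, so reject it explicitly for non-boolean types.
        wrong_bool = isinstance(value, bool) and declared != 'boolean'
        if expected and (not isinstance(value, expected) or wrong_bool):
            errors.append(f'{key!r} should be {declared}')
    for key in schema.get('required', []):
        if key not in result:
            errors.append(f'missing required field {key!r}')
    return errors

vehicle_schema = {
    'type': 'object',
    'properties': {
        'vehicle_make': {'type': 'string'},
        'vehicle_model': {'type': 'string'},
    },
}

print(schema_errors({'vehicle_make': 'Audi', 'vehicle_model': 'A3'}, vehicle_schema))  # []
print(schema_errors({'vehicle_make': 'Audi', 'year': 2020}, vehicle_schema))
```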